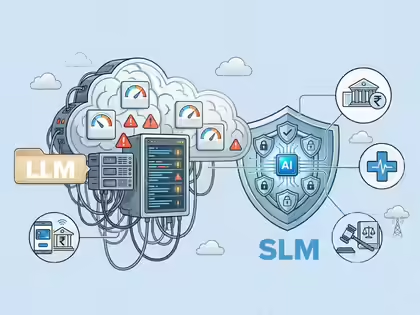

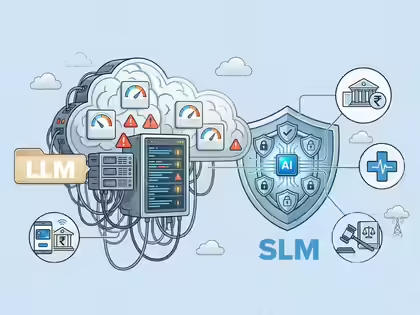

The rise of small language AI models in India reflects a clear cost-first innovation strategy, where efficiency and affordability are prioritised over scale. Startups and enterprises are increasingly adopting compact AI systems to solve real-world problems without the heavy infrastructure costs of large models.

This shift is becoming a defining trend in India’s artificial intelligence ecosystem. Rather than competing head-on with large global models, Indian companies are building lightweight, task-specific AI solutions suited to local market constraints and business realities.

Why Small AI Models Are Gaining Ground in India

Small language AI models are gaining traction primarily because of their cost and accessibility advantages. Large language models demand massive computing power, expensive GPUs, and extensive training datasets, which makes them difficult for most Indian companies to deploy at scale.

In contrast, smaller models are designed to perform specific tasks such as customer support automation, vernacular translation, or document processing. These models require fewer resources, making them more practical for startups and mid-sized businesses.

This approach aligns with India’s broader digital economy, where businesses often operate under tight budgets and need solutions that deliver immediate return on investment. As a result, efficiency is becoming more valuable than raw computational scale.

Cost-First AI Strategy Shapes Startup Innovation

India’s cost-first innovation strategy is driving startups to rethink how AI products are built and deployed. Instead of training models from scratch, many companies are fine-tuning existing open-source models for specific use cases.

This reduces development time and infrastructure costs while maintaining acceptable performance levels. For example, fintech startups are using smaller AI models for credit scoring, fraud detection, and customer onboarding processes.

The focus is on solving high-impact problems rather than building general-purpose AI systems. This targeted approach allows startups to scale faster within niche markets and deliver measurable business outcomes.

Vernacular AI and Bharat Use Cases Lead Adoption

One of the strongest drivers behind the rise of small language AI models is the demand for vernacular AI solutions. India’s linguistic diversity requires models that can understand and process multiple regional languages effectively.

Smaller AI models are better suited for this purpose because they can be trained on focused datasets specific to individual languages or regions. This enables applications such as voice assistants, chatbots, and content platforms tailored for non-English users.

In Tier-2 and Tier-3 markets, where internet adoption is growing rapidly, these solutions are critical. Businesses are using AI to engage customers in local languages, improving user experience and expanding market reach.

Infrastructure Constraints Encourage Efficient AI Development

India’s infrastructure realities also play a key role in shaping AI strategies. High-performance computing resources remain limited and are relatively expensive compared with other major markets, which makes large-scale AI deployments less viable for many organisations.

Small language AI models offer a practical alternative by reducing dependency on expensive cloud infrastructure. They can often be deployed on edge devices or low-cost servers, enabling wider adoption across industries.

This is particularly relevant for sectors like agriculture, healthcare, and logistics, where connectivity and computing resources may be limited. Efficient AI models ensure that technology can be applied even in resource-constrained environments.

Enterprise Adoption of Lightweight AI Solutions Increases

Large enterprises in India are also embracing smaller AI models as part of their digital transformation strategies. Instead of relying solely on large, general-purpose AI systems, companies are integrating multiple specialised models into their workflows.

This modular approach improves efficiency and reduces operational costs. For instance, banks are deploying AI models for specific functions such as document verification, risk assessment, and customer interaction.

The shift toward lightweight AI solutions is also helping enterprises maintain better control over data privacy and compliance. Smaller models can be deployed within secure environments, reducing reliance on external infrastructure.

Global Implications of India’s AI Approach

India’s focus on small language AI models is not just a local trend. It has broader implications for the global AI landscape. As businesses worldwide look for cost-effective AI solutions, the demand for efficient and scalable models is increasing.

India’s approach demonstrates that innovation does not always require large-scale resources. By prioritising efficiency and practical use cases, companies can achieve significant impact with limited investment.

This model of innovation is particularly relevant for emerging markets, where similar constraints exist. It positions India as a key player in developing accessible and inclusive AI technologies.

Takeaways

Small language AI models are driving cost-efficient innovation in India’s AI ecosystem

Startups are focusing on task-specific solutions instead of large general-purpose models

Vernacular AI use cases are accelerating adoption in Tier-2 and Tier-3 markets

Infrastructure constraints are encouraging the development of efficient, scalable AI systems

FAQs

What are small language AI models?

They are lightweight AI systems designed for specific tasks, requiring less computing power than large models.

Why are they popular in India?

They are cost-effective, easier to deploy, and better suited for local market needs.

How do they benefit startups?

They reduce development costs and enable faster deployment of AI-driven products.

Can small AI models compete with large models?

They may not match the general-purpose capabilities of large models, but they can be highly effective for targeted applications.
